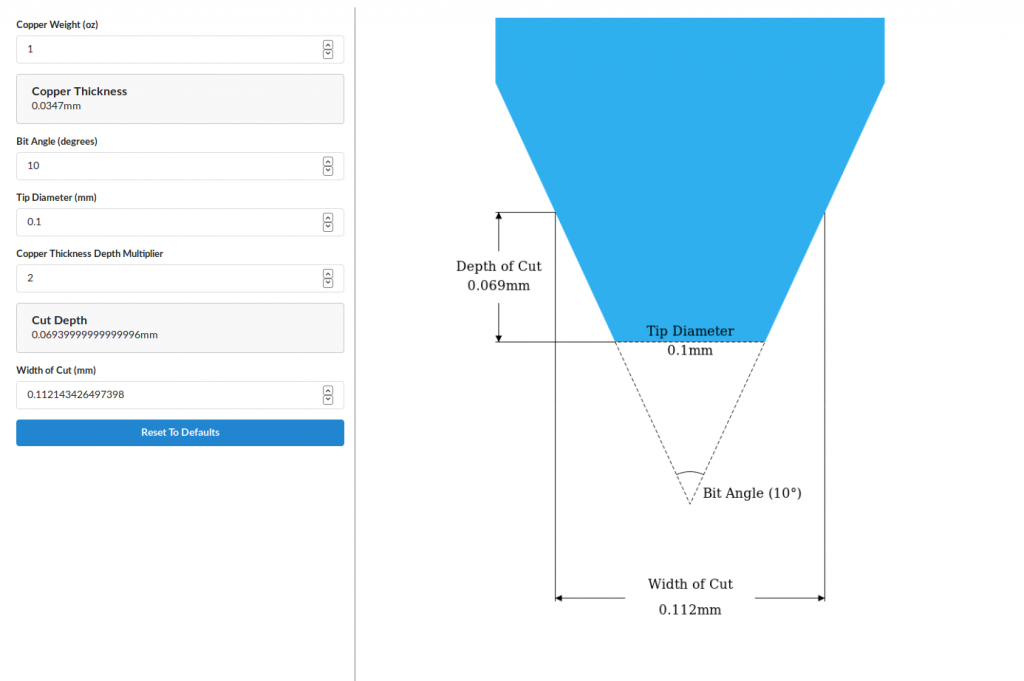

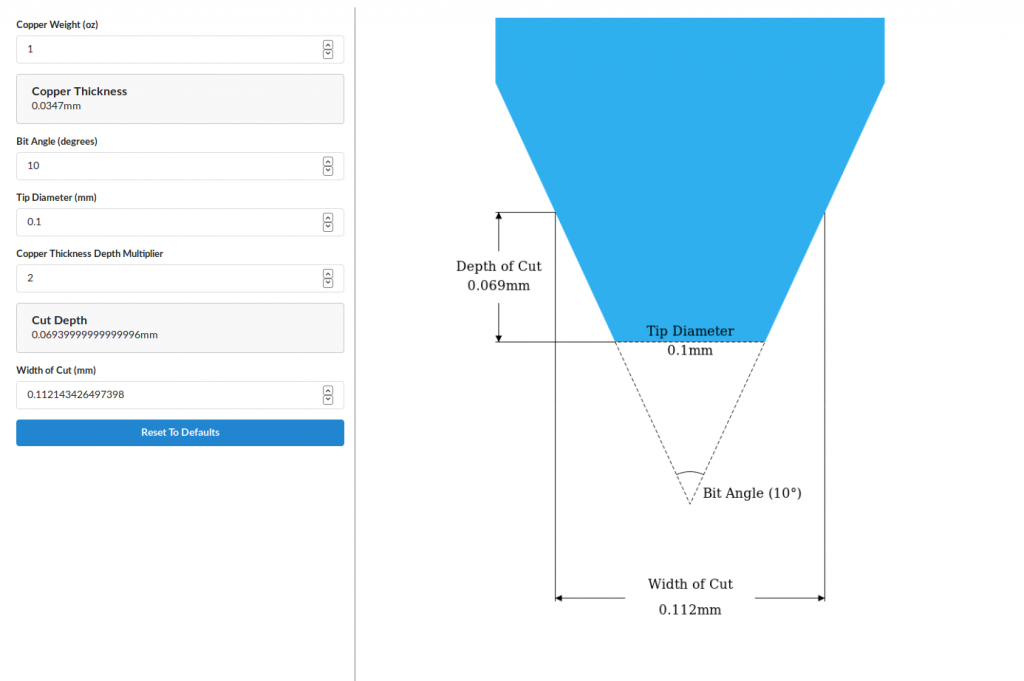

I’ve published a utility for calculating the cut depth and cut width when using V-shaped bits to engrave a PCB.

The live-action implementation can be found here.
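For anyone curious about the geometry behind a calculator like this, here is a minimal sketch in C++. It assumes an ideal V-bit described only by its included angle and a flat tip width. The function names and parameters are hypothetical and are not taken from the published utility.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical helpers, not taken from the published utility.
// Assumes an ideal V-bit: included angle in degrees, flat tip width in mm.
constexpr double kPi = 3.14159265358979323846;

// Width of the cut at the board surface for a given plunge depth.
double cutWidth(double depthMm, double angleDeg, double tipWidthMm) {
    assert(depthMm >= 0.0 && angleDeg > 0.0 && angleDeg < 180.0);
    const double halfAngleRad = (angleDeg / 2.0) * kPi / 180.0;
    return tipWidthMm + 2.0 * depthMm * std::tan(halfAngleRad);
}

// Plunge depth needed to reach a target cut width (inverse of cutWidth).
double cutDepth(double widthMm, double angleDeg, double tipWidthMm) {
    assert(widthMm >= tipWidthMm && angleDeg > 0.0 && angleDeg < 180.0);
    const double halfAngleRad = (angleDeg / 2.0) * kPi / 180.0;
    return (widthMm - tipWidthMm) / (2.0 * std::tan(halfAngleRad));
}
```

For example, `cutWidth(0.1, 90.0, 0.0)` comes out at about 0.2 mm, because the half angle is 45 degrees and its tangent is 1.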


I just finished a program and a library for using an Xbox 360 Chatpad as a Linux keyboard through the input subsystem. This means it acts exactly like a native keyboard and even works with framebuffer terminals. I’ve used it on my laptop with a USB serial adapter and on a Raspberry Pi.

Usage and more details can be found on the GitHub page here: https://github.com/timstableford/chatpad-linux

Just as a warning: for some reason one of my USB serial adapters didn’t work properly with the keyboard, and I had to try another.

Raspberry Pi Zeros come equipped with a USB OTG port, which means they can act as a USB device, in this case a network adapter. There are quite a few articles on configuring the Pi to do this, but most of them are based on Raspbian Jessie rather than Raspbian Stretch. There’s only a small difference, but it’s not well documented.

This guide will cover:

This guide also assumes some familiarity with the Linux console and Ubuntu.

This is a simple enough step but somewhat depends on the host machine and your preferences. I used the minimal Raspbian Stretch image from here. You might choose to use the full version but keep in mind this guide will only work with a version of Raspbian Stretch.

Unzip the downloaded image with your archiver GUI or…

```bash
(cd ~/Downloads && unzip *-raspbian*.zip)
```

Flash the image to your SD card. Beware of dd, the “data destroyer”: it overwrites whatever device of= points at without asking, so double-check the device name first:

```bash
sudo dd if=$(ls ~/Downloads/*raspbian-stretch-*.img) of=/dev/mmcblk0
```

All done. Now make sure to type sync in your terminal, then remove the SD card and put it back in again.

With your GUI file manager click on boot and rootfs to mount them if they haven’t been already. Then open up cmdline.txt in the boot partition and add modules-load=dwc2,g_ether at the end of the line after rootwait.

Now open up config.txt in the boot partition and add dtoverlay=dwc2 to the end of the file.

Make sure those are saved and then we’ll do the complicated part.

This next part is where Jessie and Stretch differ. In newer releases you need to modify /etc/dhcpcd.conf, and in older releases /etc/network/interfaces. This guide only covers the former.

So next we need to append the following network configuration to /etc/dhcpcd.conf:

```
interface usb0
static ip_address=192.168.137.2
static routers=192.168.137.1
```

The configuration above uses the 192.168.137.0 range because that’s the default IP range used by Windows Internet Connection Sharing, which makes this setup compatible with Windows as well.

This is a bit tricky because only root can write to the root filesystem on the SD card. To get round this, run the following:

```bash
export INTERFACES=/media/${USER}/rootfs/etc/dhcpcd.conf
cat << EOF | sudo tee --append ${INTERFACES}
interface usb0
static ip_address=192.168.137.2
static routers=192.168.137.1
EOF
```

Lastly, eject the rootfs and boot partitions using your file manager, and then the SD card is all set up.

First we need to configure the network with a static IP on the host machine.

1. Open the IPv4 settings for the connection used for the Pi (referred to as PiStatic below).
2. Change the method from Automatic (DHCP) to Manual.
3. Set the address to 192.168.137.1 and the subnet to 255.255.255.0, and leave the gateway blank.

At this point connect the Pi to the computer via the USB OTG port and wait patiently for it to boot up. Once it has, it should automatically connect to the PiStatic network, and you should be able to ping or SSH into the Pi using:

```bash
ssh pi@192.168.137.2
```

(the default password is raspberry)
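If the ping fails, one thing worth ruling out is an address typo that puts the host and the Pi on different subnets. The following is a small sketch of that check in C++. The helper names `parseIpv4` and `sameSubnet` are hypothetical, and the addresses in the usage note are the ones used in this guide.

```cpp
#include <cstdint>
#include <cstdio>
#include <string>

// Hypothetical helper: parse dotted-quad IPv4 text into a 32-bit value.
// Returns false when the text is not exactly four numbers in the range 0-255.
bool parseIpv4(const std::string& text, std::uint32_t& out) {
    unsigned a, b, c, d;
    char extra;
    // A fifth conversion succeeding means there was trailing junk.
    if (std::sscanf(text.c_str(), "%u.%u.%u.%u%c", &a, &b, &c, &d, &extra) != 4) {
        return false;
    }
    if (a > 255 || b > 255 || c > 255 || d > 255) {
        return false;
    }
    out = (a << 24) | (b << 16) | (c << 8) | d;
    return true;
}

// True when both addresses fall in the same network under the given mask.
bool sameSubnet(const std::string& ipA, const std::string& ipB,
                const std::string& mask) {
    std::uint32_t a, b, m;
    if (!parseIpv4(ipA, a) || !parseIpv4(ipB, b) || !parseIpv4(mask, m)) {
        return false;
    }
    return (a & m) == (b & m);
}
```

Calling `sameSubnet("192.168.137.1", "192.168.137.2", "255.255.255.0")` returns true for the host and Pi addresses used here, while a Pi address such as 192.168.1.2 would return false.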

Now the last thing to do is enable forwarding of your computer’s internet connection. In the console, run ifconfig to find the name of your internet-connected interface; it may be eth0 or something more obscure like enp0s31f6.

Then run the following commands (replace eth0 with your network interface):

```bash
sudo sysctl net.ipv4.ip_forward=1
sudo iptables -A FORWARD -o eth0 -j ACCEPT
sudo iptables -A FORWARD -m state --state ESTABLISHED,RELATED -i eth0 -j ACCEPT
sudo iptables -t nat -A POSTROUTING -o eth0 -j MASQUERADE
```

The forwarding will not persist across reboots, so keep a note of those commands. It’s possible to make it permanent, but leaving forwarding permanently enabled is a security risk.

You should now be able to SSH into the Pi and ping external sites such as google.co.uk.

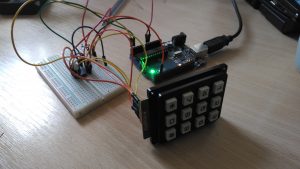

Interfacing with a keypad takes a lot of IO, doesn’t it? There isn’t really space to do much else on an Arduino or ESP8266 after having 7 or 8 pins taken for a keypad. Luckily there’s a cheap solution for this one: using an I2C I/O expander. In this case it is the PCF8574AP, the ‘A’ being the alternate address version.

The first thing to do, if it’s not supplied with your keypad, is to figure out which pins connect to which keys. This is nearly always done in a grid configuration with rows and columns. To read this configuration, you set all the pins to high/input-pullup except for a single column, which you pull low, and then see which row is pulled low with it.
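The scanning idea can be tried on a desktop without any hardware. The sketch below is a simulation under stated assumptions: `FakeKeypad` is a hypothetical stand-in for the expander plus keypad, and the bit masks are the ones from the Arduino sketch further down, which match my keypad and will differ for yours.

```cpp
#include <cstdint>

// Bit masks copied from the Arduino sketch in this post (bit 7 = P7 ... bit 0 = P0).
const std::uint8_t kColumns[] = { 0b00000100, 0b00000001, 0b00010000 };
const std::uint8_t kRows[]    = { 0b00000010, 0b01000000, 0b00100000, 0b00001000 };
const char kMap[4][3] = {
    { '1', '2', '3' },
    { '4', '5', '6' },
    { '7', '8', '9' },
    { '*', '0', '#' }
};

// Hypothetical stand-in for the expander and keypad: one key may be held down.
struct FakeKeypad {
    int pressedRow = -1;  // -1 means no key is pressed
    int pressedCol = -1;
    std::uint8_t outputs = 0xFF;

    void write8(std::uint8_t value) { outputs = value; }

    // A pressed key shorts its row pin to its column pin, so the row reads
    // low whenever that column is being driven low.
    std::uint8_t read8() const {
        std::uint8_t pins = outputs;
        if (pressedRow >= 0 && !(outputs & kColumns[pressedCol])) {
            pins &= static_cast<std::uint8_t>(~kRows[pressedRow]);
        }
        return pins;
    }
};

// Drive one column low at a time and look for a row that follows it low.
char scan(FakeKeypad& pad) {
    for (int c = 0; c < 3; c++) {
        pad.write8(static_cast<std::uint8_t>(~kColumns[c]));
        const std::uint8_t pins = pad.read8();
        for (int r = 0; r < 4; r++) {
            if (!(pins & kRows[r])) {
                return kMap[r][c];
            }
        }
    }
    return '\0';
}
```

Setting `pressedRow = 1` and `pressedCol = 2` on a `FakeKeypad` and calling `scan` returns '6', and with no key pressed it returns '\0'.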

To figure out my keypad layout I put a multimeter in continuity (buzzer) mode, probed each pair of pins on the keypad while pressing the buttons, and noted which pins correlated to which button. Then it’s just a matter of finding the common theme and making your grid as below.

Most keypads don’t tend to have such a complicated pin-out and will often group the columns together.

Next step is to wire it all together, for this you will need:

Then wire it up to the PCF8574 as follows:

| PCF8574 Pin | Arduino Pin | Keypad Pin |
|---|---|---|
| SDA/15 | A4 (and 5V through 4.7k resistor) | |
| SCL/14 | A5 (and 5V through 4.7k resistor) | |
| INT/13 | 2 | |
| VDD/16 | 5V | |
| VSS/8 | GND | |
| P[0..6] | | [0..6] |

After that it should look something like the following:

Then the last step, the code:

```cpp
#include <Arduino.h>
#include <Wire.h>

// I2C address of the expander with all address pins high (left unconnected here).
// The datasheets list 0x20-0x27 for the PCF8574 and 0x38-0x3F for the 'A'
// variant, so check yours if the chip does not respond at this address.
// http://www.ti.com/lit/ml/scyb031/scyb031.pdf
#define PCF_ADDR (0x27)
#define INTERRUPT_PIN (2)
#define DEBOUNCE_DELAY (200) // milliseconds

// Both are shared with the interrupt handler, so they are marked volatile.
volatile bool keyPressed = false;
volatile unsigned long lastPress = 0;

// Interrupt called when the PCF interrupt is fired.
void keypress() {
  if (millis() - lastPress > DEBOUNCE_DELAY) {
    keyPressed = true;
  }
}

// The pins for each of the columns and rows in the matrix below. P7 to P0.
const uint8_t kColumns[] = { B00000100, B00000001, B00010000 };
const uint8_t kRows[] = { B00000010, B01000000, B00100000, B00001000 };
const char kMap[][3] = {
  { '1', '2', '3'},
  { '4', '5', '6'},
  { '7', '8', '9'},
  { '*', '0', '#'}
};

// This is the value which is sent to the chip to listen for all
// key presses without checking each column.
uint8_t intMask = 0;

// The PCF chip only has one I2C address, writing a byte to it sets the state of the
// outputs. If 1 is written then they're pulled up with a weak resistor, if 0
// is written then a transistor strongly pulls it down. This means if connecting an LED
// it's best to have the chip as the ground terminal.
// If passing a byte value to this, eg B11111111 the left most bit is P7, the right
// most P0.
void write8(uint8_t val) {
  int error = 0;
  // This will block indefinitely until it works. This would be better done with a
  // timeout, but this is simpler. If the I2C bus isn't noisy then this isn't necessary.
  do {
    Wire.beginTransmission(PCF_ADDR);
    Wire.write(val);
    error = Wire.endTransmission();
  } while (error);
}

// Like write8, this blocks until exactly one byte has been received.
uint8_t read8() {
  while (Wire.requestFrom(PCF_ADDR, 1) != 1) {
    // Retry until the chip answers.
  }
  return Wire.read();
}

void setup() {
  Serial.begin(115200);
  Wire.begin();

  // When a pin state changed on the PCF it pulls its interrupt pin low.
  // It's an open drain so an input pullup is necessary.
  pinMode(INTERRUPT_PIN, INPUT_PULLUP);
  attachInterrupt(digitalPinToInterrupt(INTERRUPT_PIN), keypress, FALLING);

  // Calculate the interrupt mask described above.
  for (uint8_t c = 0; c < sizeof(kColumns) / sizeof(uint8_t); c++) {
    intMask |= kColumns[c];
  }
  intMask = ~intMask;
  write8(intMask);
}

// This goes through each column and checks to see if a row is pulled low.
char getKey() {
  for (uint8_t c = 0; c < sizeof(kColumns) / sizeof(uint8_t); c++) {
    // Write everything high except the current column, pull that low.
    write8(~kColumns[c]);
    uint8_t val = read8();
    // Check if any of the rows have been pulled low.
    for (uint8_t r = 0; r < sizeof(kRows) / sizeof(uint8_t); r++) {
      if (~val & kRows[r]) {
        return kMap[r][c];
      }
    }
  }
  return '\0';
}

void loop() {
  if (keyPressed) {
    char key = getKey();
    // Key may not be read correctly when the key is released so check the value.
    if (key != '\0') {
      Serial.println(key);
      lastPress = millis();
    }
    keyPressed = false;
  }
  // After getKey this needs to be called to reset the pins to input pullups.
  write8(intMask);
}
```

Once it is uploaded and everything is wired together, the sketch will output each pressed key to the serial console.
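To see which byte setup() ends up writing to the expander, the mask arithmetic can be reproduced in plain C++ on a desktop. This is only a sketch: `interruptMask` is a hypothetical helper name, and the column masks are copied from the sketch above.

```cpp
#include <cstddef>
#include <cstdint>

// Column masks copied from the sketch above (bit 7 = P7 ... bit 0 = P0).
const std::uint8_t kColumns[] = { 0b00000100, 0b00000001, 0b00010000 };

// Hypothetical helper: OR the column bits together and invert the result, so
// every column pin is driven low and every other pin is left as a pulled-up input.
std::uint8_t interruptMask(const std::uint8_t* columns, std::size_t count) {
    std::uint8_t mask = 0;
    for (std::size_t i = 0; i < count; i++) {
        mask |= columns[i];
    }
    return static_cast<std::uint8_t>(~mask);
}
```

With the three column masks from the sketch, `interruptMask(kColumns, 3)` gives 0b11101010 (0xEA). P0, P2 and P4 are held low, so pressing any key pulls its row pin low, which changes the chip's input state and fires the interrupt.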

Edited 3/12/2018: Updated CPP code to compile properly.

Following on from my previous post: what if I now want to make a nice client-side, web-based application for playing videos? We can avoid plain HTML for this because we don’t need any search engine indexing, which means we get to use an HTML5 canvas and a UI framework to speed up development.

Zebkit happens to be my favourite JavaScript canvas UI framework. It’s pretty quick to develop with and has most of the features of a standard desktop UI framework. What I found, though, is that the video streaming is a bit lackluster. Sure, the support is technically in there, but it made me long for the wonderful on-screen controls of the HTML5 video tag.